This Generation’s AI Isn’t Intelligence — It’s an Interface.

And It’s Not AI That Will Replace You.

The Reality of AI: Somewhere Between Revolution and Bubble

Since 2022, I’ve held the same position in this newsletter: “AI is extraordinary and will change the world, but the bubble around it is massive.” Both things are true simultaneously. AI birthed Cursor — one of the fastest-growing companies in history. It gave us the Ghibli-fication trend sweeping social media. We all use Claude, Perplexity, or ChatGPT daily.

But in Silicon Valley, AI has essentially become a religion. Every startup slaps “AI” on their pitch deck. VCs won’t look at you without it. Elon Musk and Sam Altman keep breathlessly predicting that AGI will arrive this year or next and replace us all.

Let’s be clear about incentives here. AI is an extraordinarily capital-intensive business. The incentive for Altman, Dario Amodei, Musk, and others is to raise more money than competitors to build faster, bigger, newer. Inflating AI’s capabilities serves that goal. And if they’re wrong? They just move the goalposts: “Our definition of AGI is different,” or “We were talking about possibilities,” or “Next year for sure.”

These people are smarter than me. But when you factor in their incentives and actually analyze the data, this generation of AI has clear limitations. The hype and FOMO machine needs to be questioned. I’ve been writing about this for years — from my December 2022 piece on generative AI FOMO, to my July 2024 analysis of the AI bubble showing cracks, to my November 2024 deep dive on OpenAI’s dangerous gamble.

Recently, I’ve been wrestling with the question: what’s the most accurate way to describe this generation of AI? My conclusion: This generation of “artificial intelligence” isn’t intelligence. It’s an interface.

Current-Gen AI: A Very Convincing Interface Pretending to Be Smart

Large language models learn from massive datasets and excel at mimicking human language. On the surface, it looks like AI understands what we’re saying, reasons through problems, and delivers conclusions. But dig deeper, and it’s clear this isn’t true intelligence. What we’re actually looking at is a revolutionary new interface for accessing and utilizing the knowledge humanity has accumulated over centuries.

“But GPT-5 is way more accurate than GPT-4!” Sure. The technology is improving. But that improvement is happening within fundamental constraints that haven’t changed.

1. No Accuracy: AI That Hallucinates Lies

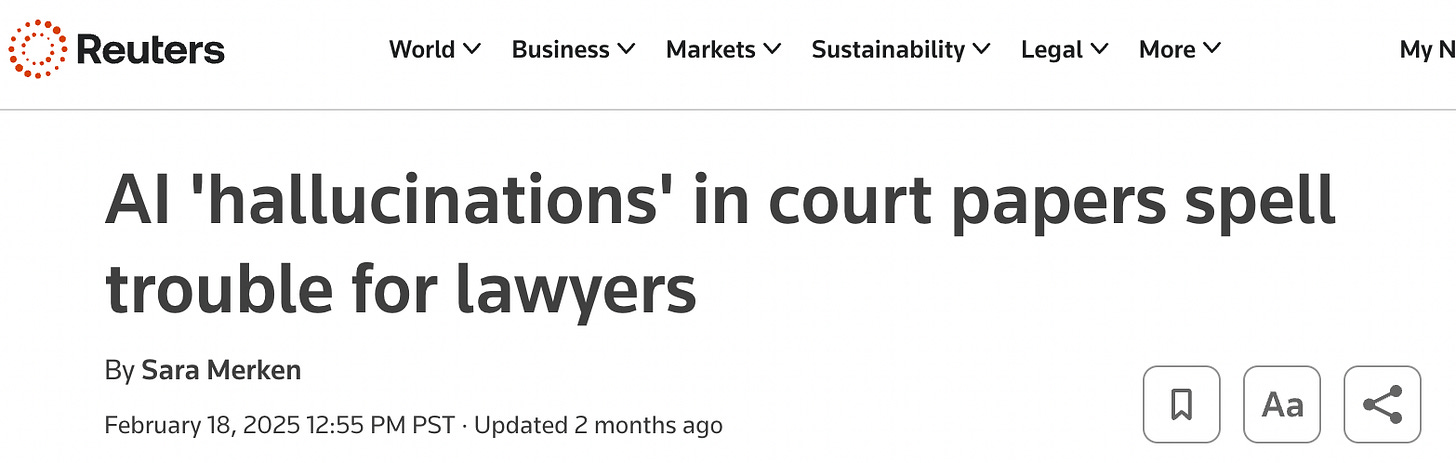

The most obvious limitation: AI regularly generates information that simply isn’t true. Remember the lawyer in 2023 who used ChatGPT to draft court filings filled with entirely fabricated case law and citations? That problem hasn’t gone away. It’s 2025 and we’re still dealing with the same issue.

In March 2025, a user asked ChatGPT for information about themselves. ChatGPT fabricated an elaborate story claiming the user was convicted of murdering two of their children and attempting to murder a third, sentenced to 21 years. The truly dangerous part? The hallucination incorporated real personal details — actual number of children, their genders, hometown — making the fiction eerily plausible and creating serious privacy and defamation risks.

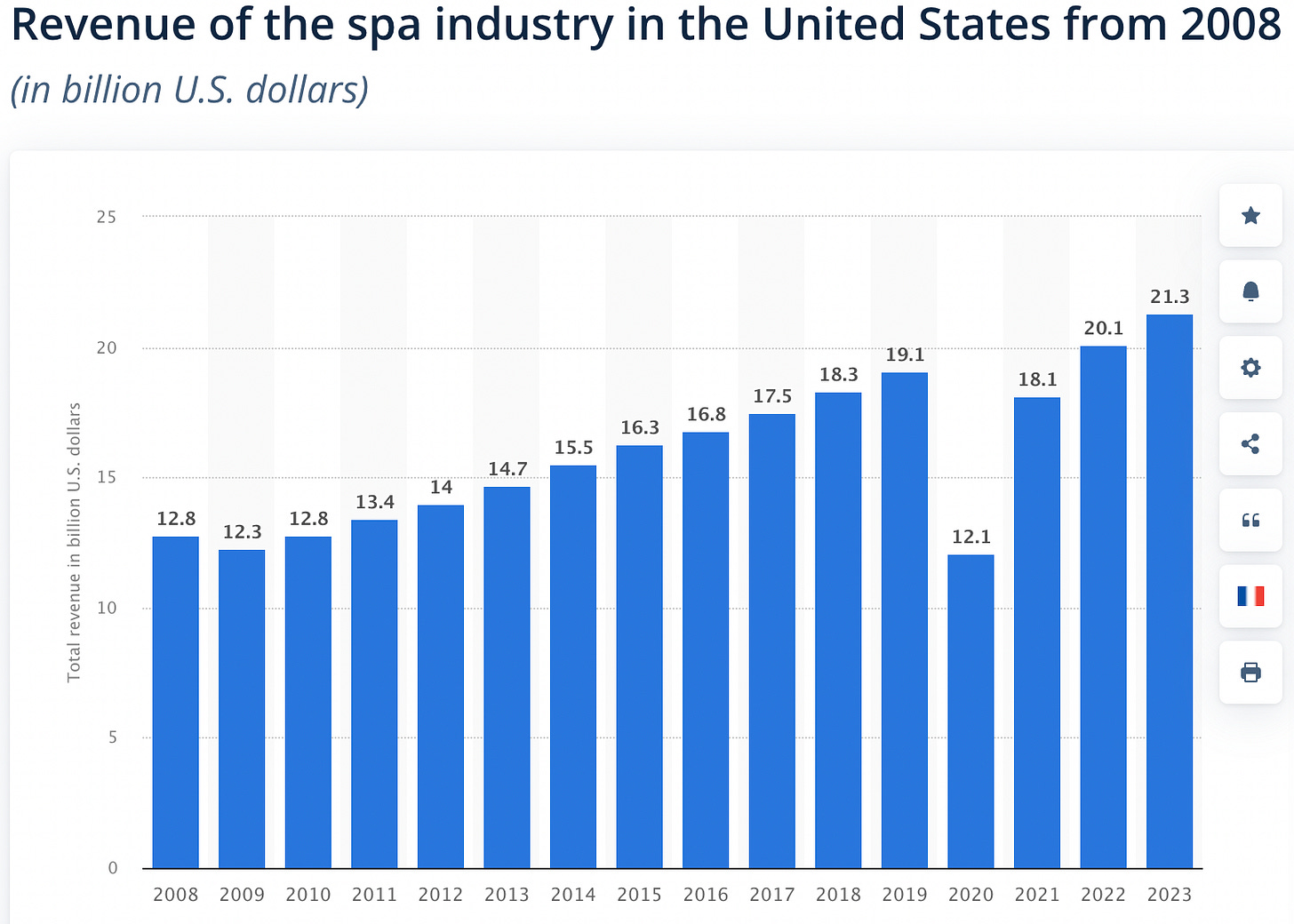

I run into this myself. Every time I use a deep research tool, it pulls incorrect statistics with absolute confidence. Recently it told me the U.S. medical spa market grew from $1.1B in 2010 to $21.3B in 2023. That sounded plausible enough, and the AI presented it with such conviction that I almost bought it. But when I checked the sources, the 2010 figure was wrong, and the data wasn’t even about medical spas; it was about the general massage spa industry. I now assume every number AI gives me is wrong until I verify it manually. It also routinely fabricates URLs and misattributes quotes to real people.

This happens because AI generates text based on pattern recognition in training data. It produces fluent, confident prose that is frequently inaccurate or entirely fictional. This ability to sound authoritative while being wrong is the clearest evidence that AI isn’t understanding or judging content — it’s generating text based on statistical word associations.
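To make “statistical word associations” concrete, here is a deliberately tiny sketch: a bigram model that picks each next word only from the words that followed the previous word in a made-up three-sentence corpus. Real LLMs use neural networks over subword tokens and are vastly more capable, so treat this as an analogy under that simplifying assumption, not a description of how ChatGPT actually works. What it does show is that every step can be locally fluent while nothing checks the finished sentence against a source.

```python
import random
from collections import defaultdict


def build_bigram_model(corpus: str) -> dict[str, list[str]]:
    """Map each word to the list of words that followed it in the corpus.

    Repeated followers are kept, so sampling from the list is
    proportional to how often each pairing occurred.
    """
    words = corpus.split()
    model: dict[str, list[str]] = defaultdict(list)
    for current, nxt in zip(words, words[1:]):
        model[current].append(nxt)
    return dict(model)


def generate(model: dict[str, list[str]], start: str,
             max_words: int, rng: random.Random) -> str:
    """Sample up to max_words words, each chosen only from what followed the previous word."""
    output = [start]
    for _ in range(max_words - 1):
        followers = model.get(output[-1])
        if not followers:  # dead end: this word never had a successor
            break
        # No fact-checking happens here: the choice depends only on co-occurrence counts.
        output.append(rng.choice(followers))
    return " ".join(output)


# Hypothetical toy corpus, loosely echoing the spa-market mix-up described above.
corpus = (
    "the market grew from 2010 to 2023 . "
    "the spa market grew fast . "
    "the massage industry grew from 2010 to 2023 ."
)
model = build_bigram_model(corpus)
print(generate(model, "the", 10, random.Random(0)))
```

Depending on the random draws, this can splice “the spa market grew” onto “from 2010 to 2023,” yielding a fluent claim that no sentence in the corpus actually makes, which has roughly the same shape as the medical spa mistake.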

2. No Creativity: It Can’t Think New Thoughts

AI models can mimic and remix existing styles from their training data, but they can’t produce the kind of original ideas that emerge from human emotion, intuition, and lived experience. Put it this way: AI can convert your photo into Ghibli style, but it could never have created Ghibli style.

Think about it carefully. When we ask AI to “create something humanity has never seen” or “imagine the unimaginable,” the output is ultimately derived from data that humans tagged as “unprecedented” or “never seen before.” It’s recombination, not creation.

AI can amplify human creative potential as a tool, but generating genuinely novel ideas remains beyond its reach. Canva’s 2024 report found that as AI automates routine tasks, uniquely human skills like creativity are becoming more valuable — designers and creators using AI tools are investing more time developing their own distinctive approaches, not less.

3. No Reasoning: It Searches for Answers, It Doesn’t Derive Them

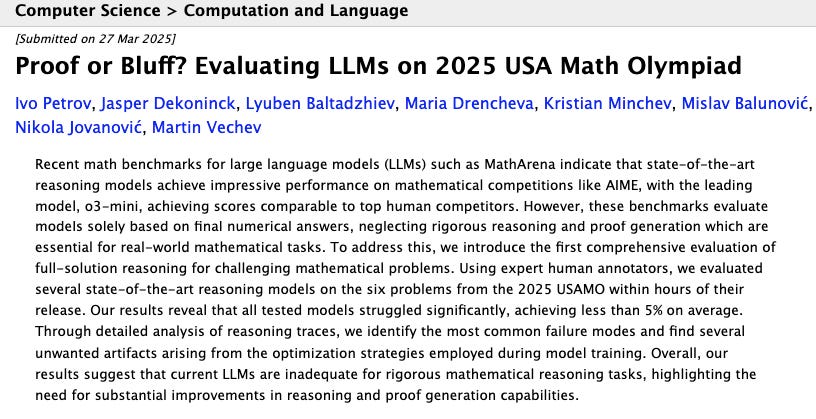

Here’s a fun one. When cutting-edge reasoning models were evaluated on the problems from the 2025 USAMO (USA Mathematical Olympiad), they scored below 5% on average. This is striking because the same models had performed at near-human-expert levels on other math benchmarks.

What makes USAMO different? Two things. First, the models were tested on the problems right after the competition, before those problems could appear in any training data, so the models couldn’t have memorized them. Second, the evaluation graded full logical proofs, not just final numerical answers. This revealed that current LLMs are fundamentally inadequate at rigorous mathematical reasoning: their proofs showed logical errors, unjustified assumptions, and a complete absence of creative problem-solving.

Even better: OpenAI got caught pulling what people are calling a “Theranos move.” It announced that its o3 model achieved 25% accuracy on Epoch AI’s FrontierMath benchmark, crushing other models’ 2% scores. Impressive, right? Except it turned out OpenAI had funded FrontierMath and had access to the problems. And OpenAI reportedly barred Epoch from disclosing this conflict of interest until after the o3 announcement. The market’s conclusion: that 25% result can’t be trusted either.

AI Won’t Replace You. But a Human Who Uses AI Well Will.

In 2015, Elon Musk said full self-driving was two years away. In 2016, Geoffrey Hinton said radiologists would be obsolete within five years. It’s 2025, and radiologists are still driving themselves to work.

McKinsey’s 2024 report confirms what we’re seeing on the ground: while AI has been adopted across industries, it’s overwhelmingly being used to complement human workers, not replace them. In fields requiring complex judgment — medicine, law, education — AI helps with information retrieval and repetitive task automation, but final decisions remain firmly in human hands.

Between the lack of accuracy, imagination, and reasoning, I’m convinced this generation of AI cannot replace humans. But one thing is undeniable: AI is an exceptionally powerful interface.

AI Is the Interface Connecting Humanity’s Accumulated Knowledge

Current-generation AI isn’t an intelligence that thinks and judges independently. It’s a remarkably powerful interface connecting humans to the vast repository of knowledge we’ve built over millennia. Just as search engines helped us navigate the ocean of web pages, AI helps us access text, images, audio, and other forms of knowledge faster and more intuitively. It understands natural language input, interprets user intent, and reduces the steps needed to get from question to answer. It’s the ultimate interface.

History Belongs to Humans With Better Tools

This should be obvious, but it’s worth stating: given equal skill, the human with a steel sword beats the human with a stone axe. And even with less skill, the human with superior tools tends to survive. Nations with cutting-edge fighter jets and submarines hold advantages over those without. Better tools, better used, has always been the formula for winning.

Computers and the Internet: The Greatest Tools Ever Built

Computers and the internet are among the most powerful tools humanity has ever created. They’ve already fundamentally transformed how we live and work, and their influence will only grow.

The Winners of the AI Era Will Be Humans Who Use AI to Use Computers Better

AI is a new interface that makes these already-powerful tools — computers and the internet — dramatically more effective. It automates repetitive work, accelerates complex tasks, and lets us accomplish with a natural language command what used to require specialized technical knowledge.

The winners of the AI era won’t be the people developing AI. They’ll be the people who skillfully leverage AI as an interface to use computers better. AI won’t replace you. But a human who uses AI well will replace you. The competitive edge of the future isn’t about how well you can build AI — it’s about how effectively you can wield it.

This Is the Beginning of AI’s Golden Age

I believe technology advances in a staircase pattern. Big innovations create step-changes, and smaller innovations accumulate within each step. This generation of AI had its big step-change with GPT, and we’ve been watching smaller innovations stack up since.

This is exactly why I disagree with the idea that the explosive growth velocity of the past two years will simply continue straight to AGI. Breaking out of the current plateau requires another major step-change innovation, and as far as I can tell, that’s not yet on the horizon.

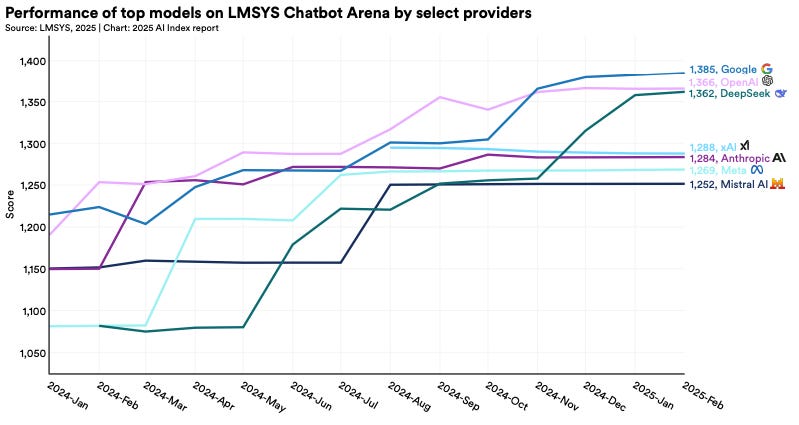

Stanford’s HAI 2025 report confirms this: AI performance improvement curves are flattening, entering a “convergence phase.” This signals we’re approaching the technical ceiling of the current architecture. But — and this is crucial — it also means the technology is stabilizing enough for practical applications to explode.

In this phase, the action shifts from breakthrough innovation to practical deployment and optimization. And historically, this is when technology creates the most economic and social value. Both the internet and mobile technology generated their greatest value during their respective convergence phases.

Which means right now is the perfect moment to build great products with AI, or to build tools that make building great products easier. If you’re a founder doing either, I’m always waiting to hear from you. (Fair warning: with so many Korean founders entering Silicon Valley lately and the events circuit going nonstop, my inbox is overflowing. I’m slow to respond and sometimes miss emails entirely. My apologies — please be patient with me.)

On a personal note, I’ve actually started building an app using vibe coding myself. I’ll write more about that experience soon and maybe find some people to build with. Stay tuned.

Thanks for reading, as always.

— Ian

So All the Successful VCs Are Stupid and Only You’re Smart?

…is the question I’m sure many of you are thinking, so I’ll just ask it myself.

Why do the smartest people in the world — VCs with the best networks — keep pouring money into deals at sky-high valuations that are likely to produce low returns? VC is a power law game where you find outliers through a contrarian approach. Everyone knows this. So why don’t they act like it?

Misaligned incentives. VC is supposedly a “long-term investment,” but it carries its own short-term performance pressure, and that pressure is called fundraising. (1) VCs need to raise a new fund every 2–3 years, so they need to show results fast. Even if they’re worried about long-term prospects, investing in whatever sector is hot and producing quick markups works in their favor. That holds not just at the fund level but also (2) for individual performance and promotions, because it’s a cyclical game you can’t opt out of, and (3) for accountability: when things go wrong, you get less blame if everyone else got it wrong too (e.g., “Sequoia made the same bet and it blew up too, so...”). I think this logic still holds.

I plan to write about this in more detail later, but when evaluating fund performance, you need to weigh these incentive structures carefully. If you want high, repeatable VC returns over the long term, I believe the focus should shift from short-term returns and performance to team composition, strategy, and forward-looking vision. Understanding these less visible dynamics requires embedding yourself deeply in Silicon Valley’s local networks and absorbing the culture from the inside. I feel more strongly about that every day.

When co-investing with VCs in startups, don’t just look at the firm’s brand name; examine the current state of its fund and its partners’ incentives. Many new partners and junior investors have been hired or promoted recently, and funds with mediocre prior performance tend to chase hot sectors for short-term wins to justify their existence. The probability of reckless investments goes up, and their judgment on target companies can get clouded. Rather than assuming “they must know what they’re doing,” do deeper due diligence. (I’ve been noticing a few of these funds lately, heh.) So even though it’s tough in the short term, I’m going to keep studying hard and stay disciplined to become a VC that generates repeatable long-term returns.